Registers a global forward hook for all the modules. Registers a forward pre-hook common to all modules. Non-linear Activations (weighted sum, nonlinearity). DataParallel Layers (multi-GPU, distributed). A kind of Tensor that is to be considered a module parameter. Base class for all neural network modules. These are the basic building blocks for graphs:

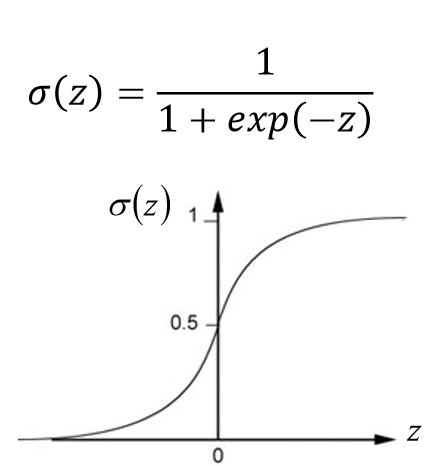

Line 2: We also import torch.nn.functional with the alias TF.

Line 5: We define some sample input data and labels; the input data has 4 samples and 10 classes.

Line 6: We create a tensor called labels using the PyTorch library. The tensor is of type LongTensor, which means that it contains 64-bit integer values.

Line 9: The TF.cross_entropy() function takes two arguments: input_data and labels. The input_data argument is the predicted output of the model, typically the raw output (logits) of the final layer before any softmax activation is applied, since TF.cross_entropy() applies log-softmax internally. The labels argument holds the true class label for each input sample.

Line 15: We compute the softmax probabilities manually, passing input_data and dim=1, which means that the softmax function is applied along the second dimension of the input_data tensor, that is, across the classes of each sample.

Line 18: We also print the computed softmax probabilities.

Line 21: We compute the cross-entropy loss manually by taking the log of the softmax probabilities at the target class indices, averaging over all samples, and negating the result.

Line 24: Finally, we print the manually computed loss.

To summarize, cross-entropy loss is a popular loss function in deep learning and is very effective for classification tasks. While cross-entropy loss is a strong and useful tool for deep learning model training, it's crucial to remember that it is only one of many possible loss functions and might not be the ideal option for all tasks or datasets. Therefore, to identify the best settings for our unique use case, it is always a good idea to experiment with alternative loss functions and hyper-parameters.
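The line-by-line walkthrough refers to a script that is not reproduced on this page. The following is a minimal sketch of what such a script might look like, not the original code: the random input values, the fixed seed, the specific label values, and the variable names softmax_probs, target_probs and manual_loss are assumptions made for illustration, and the line numbers will not match the walkthrough exactly.

```python
import torch
import torch.nn.functional as TF

torch.manual_seed(0)  # assumption: fixed seed so the run is repeatable

# Sample data: 4 samples, 10 classes (random logits are an assumption)
input_data = torch.randn(4, 10)
# Hypothetical target class indices, stored as 64-bit integers
labels = torch.LongTensor([2, 5, 1, 9])

# Built-in cross-entropy: takes raw logits and integer class labels
loss = TF.cross_entropy(input_data, labels)
print(loss)

# Softmax along dim=1, i.e. across the classes of each sample
softmax_probs = TF.softmax(input_data, dim=1)
print(softmax_probs)

# Manual loss: pick each sample's probability for its target class,
# take the log, average over the samples, and negate
target_probs = softmax_probs[torch.arange(len(labels)), labels]
manual_loss = -torch.log(target_probs).mean()
print(manual_loss)
```

With the default mean reduction, the value printed for loss and the value printed for manual_loss should agree up to floating-point error, which is a quick way to check that the manual computation matches what TF.cross_entropy() does.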
